Introduction

Research from McKinsey shows that while AI adoption is widespread, most enterprises remain stuck in experimentation. As of late 2025, 88% of organisations report using AI in at least one business function, yet the majority are still in pilot or early-stage deployments. Although 64% of leaders believe AI enables innovation, only 39% report measurable Earnings Before Interest and Taxes (EBIT) impact at the enterprise level, highlighting a growing gap between intent and outcomes.

MIT research reinforces this gap. Nearly 95% of generative AI pilots fail to deliver meaningful business impact, not because of model limitations or lack of talent, but due to difficulties integrating AI into existing workflows, systems, and decision structures. The breakdown occurs when pilots attempt to move into production, where organisational complexity begins to dominate.

Taken together, these signals suggest that the challenge is no longer building AI capability, but integrating AI into live systems. This blog examines the leadership traps that surface during integration, the second-order effects often underestimated, and why AI integration proves harder than cloud adoption, microservices, or earlier waves of digital transformation.

The 7 AI Integration Traps

The traps that follow are not theoretical. They do not appear during pilots. They surface when AI is integrated into production systems. Each trap reflects a recurring pattern seen in large enterprises where AI capability exists, but value fails to follow. The purpose of this section is not to list best practices, but to help leaders recognise where integration decisions quietly undermine scale, stability, and return on investment.

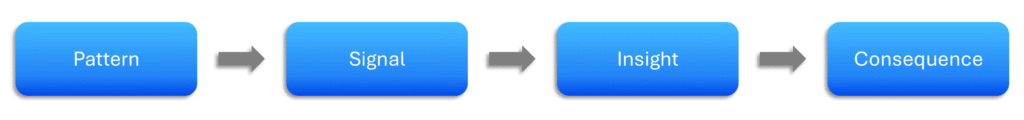

Figure-01: Recognising Integration Traps

This blog does not propose fixes, as the same trap can require different responses depending on context. The harder and more valuable task for leaders is recognising the problem correctly before choosing a solution.

Disruptive Integration

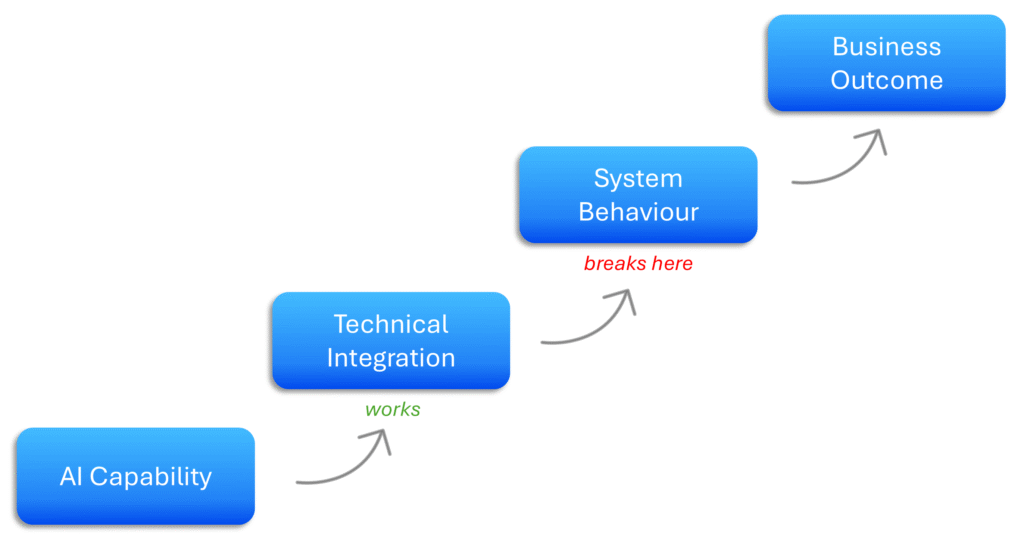

Over the last two decades, organisations have invested heavily in data-centre migration, cloud adoption, and digital transformation. Since 2023, AI has come to be treated as unavoidable at the enterprise level. As a result, the immediate question many leaders ask is how quickly AI can be integrated into existing production systems. In my conversations with senior leaders, rapid integration consistently emerges as a top priority. Integration is often treated as a purely technical exercise, focused on connecting AI to legacy platforms, fragmented data flows, and loosely coupled upstream and downstream systems.

“AI integration cannot be approached the same way as cloud adoption or digital transformation”

The real disruption surfaces after deployment. While AI-enabled solutions often work as expected in test environments, production exposes how end-to-end systems actually behave at scale (McKinsey). Exceptions increase, manual interventions reappear, and outputs become inconsistent across workflows. At this point, leaders realise the issue is not AI capability, but how AI has been integrated into legacy systems and existing business processes (Gartner). By that stage, teams are spending more time managing the side effects of integration than realising any meaningful value.

Figure-02: Where Integration Breaks

Decision Ownership Gaps

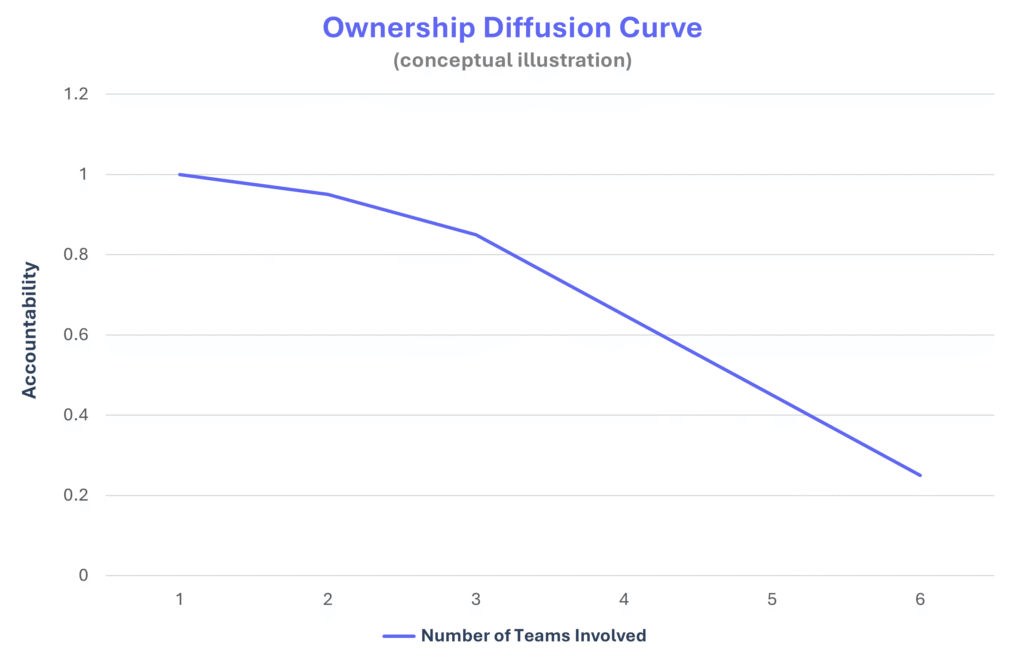

Ownership rarely sits with one team in AI initiatives. Technical teams build and deploy AI, while budgets, risk, and value sit with business units. As a result, responsibility for outcomes is split across functions. This gap between who delivers the system and who owns the result is one of the earliest cracks in AI integration, and it is often overlooked at leadership level (IJCA).

The problem becomes clear once AI starts influencing real business decisions. Unlike traditional software, AI cuts across technical execution and business judgement. Decision rights shift, but ownership does not. When something goes wrong, escalation slows because no single owner feels responsible for stepping in. Several teams are involved, yet none clearly owns the outcome.

Figure-03: Accountability vs Teams Involved

Over time, this creates drag. Decisions sit with the wrong owners or wait for alignment that never arrives. Teams optimise for their own goals rather than the overall business result. Confidence in AI drops, not because the technology fails, but because unclear ownership turns AI into friction instead of something the business can rely on (Deloitte).

Feeding Legacy Data Blindly

Test data has always been a challenge in traditional software delivery. It often does not reflect production because of data privacy constraints, incomplete relationships between data, configuration differences, and limited real-world scenarios. When AI systems are integrated, they inherit this issue by default. Enterprise research from IBM continues to highlight how data quality gaps directly affect the reliability of AI-driven systems.

When existing and legacy data is fed into the AI layer, the output can look promising. Business and leadership teams begin seeing insights that were not visible earlier. However, once these AI workflows enter production, results often differ from what was tested. Differences between test and production environments, third-party feeds, and changing data conditions begin to surface. One key aspect that often gets ignored is context. The data may be accurate, but it was not originally created to support AI-driven decision-making.

The outcome is that weaknesses in data and relationships between data points get amplified. Outputs become inconsistent and teams begin questioning the results. Trust gradually drops, not because the AI system is failing, but because the underlying data was never designed for the type of decisions now being made.
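One way to recognise this trap early is to compare how a single numeric field is distributed in test data versus production. The sketch below uses the Population Stability Index, a common drift measure that this blog does not itself prescribe. The function name, the bin count, and the clamping of out-of-range values are illustrative assumptions, and the result is a signal for investigation rather than a verdict.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    live: Sequence[float],
    bins: int = 10,
) -> float:
    """PSI between a baseline sample (e.g. test data) and a live sample.

    Bins are equal-width over the baseline range (an assumption made
    for simplicity; quantile bins are another common choice).
    """
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("baseline has no spread; PSI is undefined")
    width = (hi - lo) / bins

    def shares(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the
            # first or last bin so they still count as drift.
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    b, l = shares(baseline), shares(live)
    return sum((li - bi) * math.log(li / bi) for bi, li in zip(b, l))
```

A value near zero suggests the live field looks like the data the workflow was tested on. A commonly quoted rule of thumb treats values above roughly 0.2 as a material shift, though that threshold is a convention and should be set per field and per decision.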

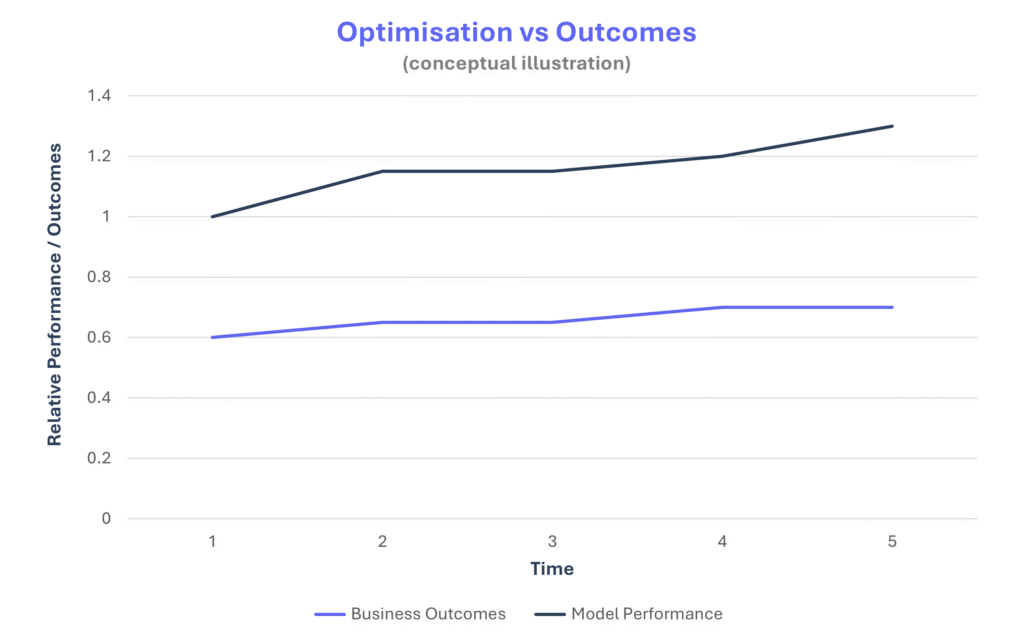

Misaligned Success Signals

Once the AI layer is integrated, the next question for leaders is how to measure success. KPIs become the centre of attention. Reports show reduced effort, improved model accuracy, faster turnaround, and better response times. These are leading indicators. However, lagging indicators such as revenue growth, margin improvement, risk reduction, or strategic impact often remain flat. Dashboards look positive, but the business outcome does not materially change.

Recent industry research shows that only about 5% of organisations achieve meaningful enterprise value from AI at scale. Nearly 60% report little to no material impact despite heavy investment (BCG, 2025).

“Model performance is not business performance”

Teams continue optimising what is measured and rewarded. If model performance and operational efficiency are tracked, they improve. Local optimisation happens at the model level, but system-level performance may not move. Gains are absorbed elsewhere in the workflow. What often remains unmeasured is whether these improvements translate into real business value.

Figure-04: Model Metrics vs Business Impact

Over time, this misalignment creates drift. Capital, leadership time, and organisational focus are spent improving AI metrics, while higher-impact changes are delayed. Reports signal progress, yet financial returns do not follow at the same pace. The issue is not that AI is underperforming, but that success is being defined in a way that does not reflect true business impact.

Ignoring Run-Time AI Costs

Budget allocation for AI cannot stop at implementation. The costs an organisation incurs eventually flow through to its pricing, and customers, whether B2B or B2C, are unlikely to absorb continuous price increases. Yet AI deployment is often treated like a traditional software release, where costs are front-loaded and expected to taper off once in production.

Unlike traditional systems, AI systems can degrade over time if they are not actively maintained, so they require ongoing adjustment and oversight. In practice, infrastructure spend, monitoring effort, retraining cycles, and data housekeeping grow steadily once the system is live. Industry research highlights that compute and infrastructure costs rise significantly as AI scales into production (IBM).

“Run-time cost is part of the design, not an afterthought”

AI systems require continuous monitoring, recalibration, and operational support to remain reliable. These costs do not remain static. Over time, operational expenses expand, ROI narrows, and early wins begin to lose financial meaning. What starts as an efficiency gain can turn into sustained overhead, creating pressure on margins and gradual strain on customer trust.
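The arithmetic behind a narrowing ROI can be made concrete with a small sketch. It assumes a flat monthly benefit and a run cost that compounds at a fixed monthly rate. Both are simplifying assumptions, and the function name and every figure used with it are hypothetical rather than drawn from any cited research.

```python
from typing import Optional


def first_month_cost_exceeds_benefit(
    monthly_benefit: float,
    initial_run_cost: float,
    monthly_cost_growth: float,
    horizon_months: int = 60,
) -> Optional[int]:
    """Return the first month in which run cost exceeds the benefit.

    Returns None if that never happens within the horizon.
    """
    cost = initial_run_cost
    for month in range(1, horizon_months + 1):
        if cost > monthly_benefit:
            return month
        # Run cost compounds each month (infrastructure, monitoring,
        # retraining, data housekeeping rolled into one figure).
        cost *= 1 + monthly_cost_growth
    return None


# Hypothetical figures: 100,000 benefit, 40,000 starting run cost,
# 5% monthly cost growth.
crossover = first_month_cost_exceeds_benefit(100_000, 40_000, 0.05)
```

With these illustrative inputs the crossover falls in month 20, well inside the lifetime most organisations expect from a production system. The exact month matters less than the shape: a comfortable early margin can disappear without any single large cost decision being made.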

Reactive Governance

Governance is usually considered, but it often shows up only as documentation, review sign-offs, and go/no-go approvals before release. Organisations put in place AI policies, ethical principles, compliance checklists, and risk committees. In many cases, these are paper-based controls: they respond to issues after they appear in live systems rather than being embedded in daily operations.

The real gap is in design. AI governance needs to be built into the system itself through things like decision traceability, clear audit logs, human override paths, explainability layers, and defined escalation routes. When these mechanisms are not part of the architecture, governance gets bolted on later as extra controls and manual checkpoints. Over time, this slows execution, creates friction across teams, and turns governance into a constraint instead of an enabler.
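To illustrate what "built into the system" can look like, here is a minimal sketch in which every AI-influenced decision produces a traceable record, low-confidence outputs are escalated instead of applied, and a human reviewer can override the model. All names (`DecisionRecord`, `decide`, the 0.8 confidence threshold, the in-memory list used as an audit log) are hypothetical and chosen for illustration. A real system would write to durable, access-controlled storage.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class DecisionRecord:
    decision_id: str
    model_version: str
    inputs: dict
    model_output: str
    confidence: float
    final_output: str = ""            # empty while awaiting a human
    overridden_by: Optional[str] = None
    escalated: bool = False
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def decide(
    record: DecisionRecord,
    audit_log: List[str],
    min_confidence: float = 0.8,
    reviewer: Optional[str] = None,
    reviewer_output: Optional[str] = None,
) -> DecisionRecord:
    """Apply the override and escalation rules, then log the decision."""
    if reviewer is not None and reviewer_output is not None:
        # Human override path: the reviewer's decision wins.
        record.final_output = reviewer_output
        record.overridden_by = reviewer
    elif record.confidence < min_confidence:
        # Escalation route: do not act on a low-confidence output.
        record.escalated = True
    else:
        record.final_output = record.model_output
    # Audit log: one JSON line per decision, written on every path.
    audit_log.append(json.dumps(asdict(record)))
    return record
```

The point of the sketch is structural. Because the record and the log entry are created in the same code path as the decision, traceability does not depend on a later review step or a separate checklist.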

“Governance delayed becomes governance that blocks”

Governments are already moving in this direction. The UK Government, for instance, has released an AI Playbook that focuses on responsible deployment and on building governance into systems, not just documenting policies. This signals that governance is expected to be part of system design and operations, not something added after issues arise.

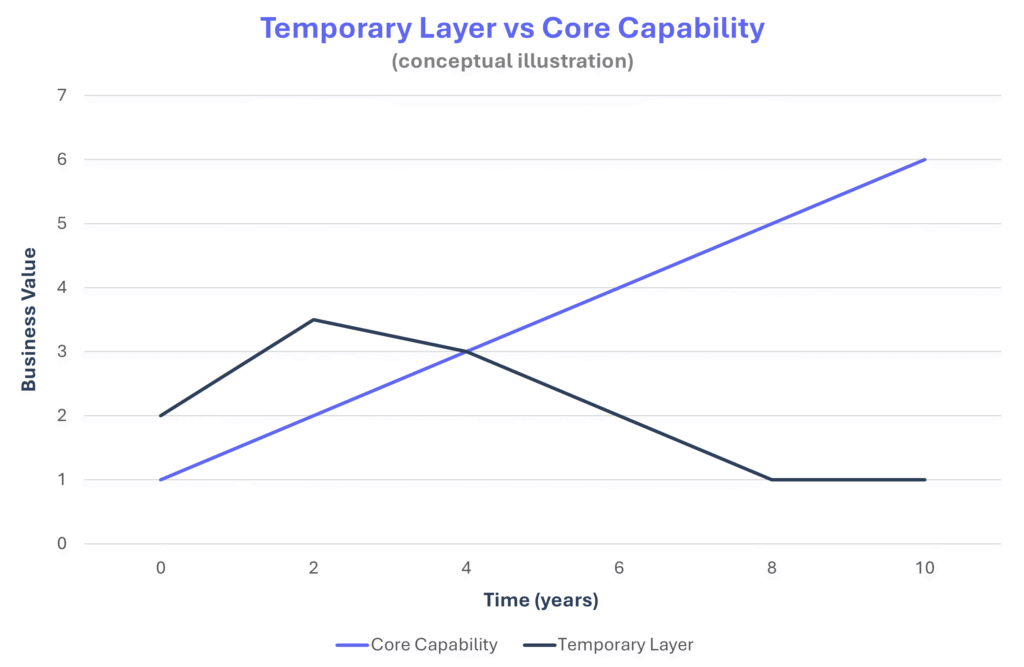

Treating AI as a Temporary Layer

Many organisations treat AI integration as a large, one-time project. Whether introduced as an add-on feature or an optional layer over existing systems, the effort is seen as a milestone. Once the AI-enabled solution moves into maintenance and support, it is expected to run with its original capability and minimal change. Over time, the system remains static while business conditions, data patterns, and customer expectations continue to evolve. As a result, the perceived business value begins to decline.

Figure-05: Impact Trajectory Over Time

AI components cannot remain fixed if they are expected to deliver sustained impact. Without continuous refinement of models, data inputs, workflows, and feedback loops, performance and relevance gradually drop. Plug-and-play APIs or models trained once in-house rarely hold their value in the long run. AI needs to be treated as an evolving capability, not a completed project. When it is not, organisations believe they have “done AI” while the competitive advantage quietly fades.

Conclusion

AI capability is no longer the constraint. Most enterprises can access strong models, launch pilots, and even deploy into production. The real difficulty begins after deployment, when integration exposes structural weaknesses in ownership, data, governance, cost models, and how success is measured.

These traps are rarely technical failures. They are leadership blind spots. They surface at scale, not in experimentation. When results disappoint, attention often turns to the model, while the deeper issue sits in the operating structure around it.

Recognising these patterns early does not slow AI adoption. It prevents quiet erosion of value after release. AI integration is not a milestone or a one-time transformation. It is an ongoing leadership responsibility.